Michael D. Moberly, Principal, Founder kpstrat

My introduction to machine learning came years ago, while I was developing and teaching university (security) courses built on the principles and concepts of CPTED (crime prevention through environmental design). (See 148313NCJRS.pdf at ojp.gov and Crowe NCPI CPTED.doc at seattleu.edu.)

In collaboration with a professor of architecture, we sought to 'program' architecturally nuanced CPTED principles into a CAD (computer-aided design) system, an early form of machine learning.

The principles of CPTED call for designing 'built environments' to mitigate, if not prevent, crime and the fear of crime by creating…

- stronger senses of personal territoriality,

- positive behaviors associated with that territoriality, which are likely to deter or inhibit the presence of bad actors, and

- attractive 'feel good, feel safe' (intangible) benefits for users of those environments,

thereby minimizing a built environment's receptivity, vulnerability, probability, and even criticality to criminal activity. If and when sustained, these outcomes become attractive and valuable intangible assets.

In Defensible Space (1972), Oscar Newman offered a strong critique of the design of America's public housing, which he described as crowded, space-limited, high-rise structures that rapidly became havens for bad actors and criminal activity, leaving residents in persistent fear.

CPTED's 'built environment' principles draw on rational choice and routine activity theories, which address the 'opportunity' for criminal activity to occur, for example by:

- reducing resident anonymity.

- increasing the sense of natural surveillance.

- creating environments less supportive of, or receptive to, undesirable behavior and/or criminal activity.

Some criminologists were not enamored of CPTED, suggesting it could simply displace crime to other locations rather than prevent it.

CPTED delivers intangible assets…through its focus on the effective design and use (by people) of the built (tangible, physical, fixed) environment in a manner that mitigates users' fear and expectation of crime within their territory.

CPTED's primary objective is to…reduce or remove the opportunity for crime to occur in particular (built) environments and to promote favorable interactions among legitimate users and/or residents. (Adapted by Michael D. Moberly from Greater Manchester, UK 'Design for Security'.)

I was fortunate to collaborate with a forward-looking professor of architecture (Jon Davey). Our collaboration focused on programming CPTED principles into 'computer-aided design' (CAD) systems running on the large desktop computers of the day. This was an early form of 'machine learning', albeit rules-based (computer) programming.

What this collaboration sought to accomplish, and did with varying degrees of success, was to 'program' architecturally nuanced CPTED principles into a CAD machine. The machine (computer), in turn, would alert CAD users (designers of built environments) when a particular design feature breached a CPTED principle. A 'prompt' would appear on the machine's screen, awaiting (architectural) modification or an override as the circumstances and/or design warranted.

We respectfully described these CAD prompts as either ‘good or bad CPTED’.
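The prompting behavior described above can be illustrated with a minimal, rules-based sketch in Python. It is not the original CAD software, whose rules and data format are not described here. The `DesignFeature` attributes, the rule ids, and the thresholds (for example, at most six units sharing one entry) are hypothetical, chosen only to show how a breached rule might raise a 'bad CPTED' prompt that a designer then resolves by modifying the design or overriding the rule.

```python
from dataclasses import dataclass
from typing import Callable, FrozenSet, List, Tuple


# Hypothetical, simplified description of one design feature in a CAD model.
@dataclass(frozen=True)
class DesignFeature:
    name: str
    visible_from_street: bool  # can passers-by see this space? (natural surveillance)
    windows_overlooking: int   # windows looking onto the space (natural surveillance)
    units_sharing_entry: int   # households sharing one entry (anonymity/territoriality)


# A rule pairs a CPTED principle with a pass/fail test; True means "good CPTED".
@dataclass(frozen=True)
class Rule:
    rule_id: str
    principle: str
    passes: Callable[[DesignFeature], bool]
    prompt: str


# Illustrative rules only: the thresholds are assumptions, not CPTED standards.
RULES: List[Rule] = [
    Rule("street-view", "natural surveillance",
         lambda f: f.visible_from_street,
         "space cannot be seen from the street"),
    Rule("overlook", "natural surveillance",
         lambda f: f.windows_overlooking >= 1,
         "no windows overlook this space"),
    Rule("shared-entry", "territoriality / reduced anonymity",
         lambda f: f.units_sharing_entry <= 6,
         "too many units share this entry"),
]


def check_design(feature: DesignFeature,
                 overrides: FrozenSet[str] = frozenset(),
                 rules: List[Rule] = RULES) -> Tuple[str, List[str]]:
    """Return ("good CPTED" or "bad CPTED", outstanding prompts) for one feature.

    A rule whose id appears in `overrides` is treated as a designer override
    and produces no prompt.
    """
    prompts = [
        f"{feature.name}: {r.prompt} ({r.principle})"
        for r in rules
        if r.rule_id not in overrides and not r.passes(feature)
    ]
    return ("good CPTED" if not prompts else "bad CPTED"), prompts


if __name__ == "__main__":
    stairwell = DesignFeature("rear stairwell", False, 0, 12)
    verdict, prompts = check_design(stairwell)
    print(verdict)
    for p in prompts:
        print("  PROMPT:", p)
    # The designer overrides one rule; the remaining prompts still await changes.
    _, remaining = check_design(stairwell, overrides=frozenset({"street-view"}))
    print(len(remaining), "prompt(s) still outstanding")
```

Each rule is a plain predicate, so nothing is "learned" in the statistical sense; the sketch simply mirrors the rules-based programming described in this post, with overrides left to the designer's judgment.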

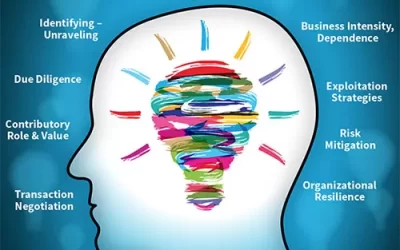

The Business Intangible Asset Blog was created in 2006 and now includes 1,100+ topic-specific posts intended to provide readers (business leaders, management teams, R&D administrators, boards, investors, etc.) with reliable insights into the application, valuation, competitive advantage, revenue generation capability, and resilience and sustainability of intangible assets designated as 'mission essential'.

Posts at the Business Intangible Asset Blog are intended to (a.) draw attention to, and guide, the development, application, management, safeguarding, and risk mitigation of intangible assets designated as 'mission essential', and (b.) describe how, when, and where intangible assets contribute to enhancing valuation, competitiveness, brand, reputation, revenue generation, operating culture, resilience, and sustainability.